Ollama deployed with Docker cannot use the GPU

A large model deployed in a container cannot access the GPU


I have recently been experimenting with deploying large models.

1. The problem

The Docker version is as follows

Create a docker-compose.yml file with the following content:

name: 'ollama'
services:
  ollama:
    #restart: always
    image: ollama/ollama
    container_name: ollama13
    runtime: nvidia
    environment:
      - TZ=Asia/Shanghai
      - NVIDIA_VISIBLE_DEVICES=all
    networks:
      - ai-tier
    ports:
      - "11745:11434"
    volumes:
      - ./data:/root/.ollama
networks:
  ai-tier:
    name: ai-tier
    driver: bridge

Start the container:

docker compose up -d

This reports an error:

no compatible GPUs were discovered

no nvidia devices detected by library /usr/lib/x86_64-linux-gnu/libcuda.so.550.135
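To check for this failure without reading the whole log by eye, a small helper can scan the log text for the two messages quoted above. This is only a sketch: the function name `gpu_log_status` and its output strings are made up for illustration, and it assumes the messages appear in the log exactly as shown here.

```shell
# gpu_log_status: read log text on stdin and report whether it contains
# either of the GPU-discovery failure messages quoted above.
# (Hypothetical helper; the name and output strings are illustrative.)
gpu_log_status() {
    if grep -q -i -e 'no compatible GPUs were discovered' \
                  -e 'no nvidia devices detected'; then
        echo "gpu-not-detected"
        return 1
    fi
    echo "no-gpu-error-found"
    return 0
}
```

For the container defined above, it could be fed with `docker logs ollama13 2>&1 | gpu_log_status`.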

2. Testing on the host

curl -fsSL https://ollama.com/install.sh | sh

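# Run this once the installation has finished

ollama run llama3.2

Running Ollama directly on the host, the model uses the GPU normally, which shows that the driver is not the problem.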
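One way to confirm that the model really landed on the GPU is to look at the PROCESSOR column of `ollama ps` while the model is loaded. The helper below is a rough sketch that only inspects that text; the name `ollama_processor` and its output strings are hypothetical, and it assumes the column reports values such as "100% GPU" or "100% CPU".

```shell
# ollama_processor: read `ollama ps` output on stdin, skip the header row,
# and report whether any loaded model mentions GPU.
# (Hypothetical helper; assumes the PROCESSOR column contains "GPU" or "CPU".)
ollama_processor() {
    awk 'NR > 1 && /GPU/ { found = 1 }
         END { print (found ? "gpu" : "cpu-or-not-loaded") }'
}
```

On the host it could be used as `ollama ps | ollama_processor`.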

3. Troubleshooting

Test whether a container can access the GPU at all:

docker run --rm --gpus all nvidia/cuda:12.0.1-runtime-ubuntu22.04 nvidia-smi

[root@worker1 ~]# docker run -it --rm --gpus all nvidia/cuda:12.4.0-base-ubuntu22.04 nvidia-smi

Unable to find image 'nvidia/cuda:12.4.0-base-ubuntu22.04' locally

12.4.0-base-ubuntu22.04: Pulling from nvidia/cuda

bccd10f490ab: Pull complete

edd1dba56169: Pull complete

e06eb1b5c4cc: Pull complete

7f308a765276: Pull complete

3af11d09e9cd: Pull complete

Digest: sha256:80d4d9ac041242f6ae5d05f9be262b3374e0e0b8bb5a49c6c3e94e192cde4a44

Status: Downloaded newer image for nvidia/cuda:12.4.0-base-ubuntu22.04

Failed to initialize NVML: Unknown Error

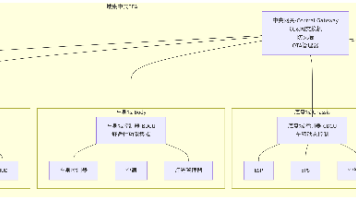

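Based on this error, edit the NVIDIA container runtime configuration file:

vim /etc/nvidia-container-runtime/config.toml

Change the no-cgroups=true entry shown in the screenshot to

no-cgroups=false

cgroups limit the total resources (memory, CPU, disk, and so on) that a task may use. This option tells the NVIDIA container runtime whether to skip cgroup setup for the container, so it has to be false for the default (non-rootless) Docker setup used here.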
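The same check and edit can be scripted instead of done by hand in vim. The following is a minimal sketch, not an official tool: the function names `no_cgroups_value` and `set_no_cgroups_false` are made up for illustration, and it assumes the option sits on its own uncommented line in the form `no-cgroups = true` (with or without spaces around `=`).

```shell
# no_cgroups_value FILE
# Print the value of the first uncommented no-cgroups line in FILE, if any.
# (Hypothetical helper; commented-out lines starting with '#' are ignored.)
no_cgroups_value() {
    sed -n 's/^[[:space:]]*no-cgroups[[:space:]]*=[[:space:]]*\([a-z]*\).*$/\1/p' "$1" |
        head -n 1
}

# set_no_cgroups_false FILE
# Rewrite an uncommented "no-cgroups = true" line to "false" in FILE.
# A temporary file is used rather than sed -i, and the result is written
# back with cat so the original file's owner and permissions are kept.
set_no_cgroups_false() {
    _ncg_tmp=$(mktemp) &&
        sed 's/^\([[:space:]]*no-cgroups[[:space:]]*=[[:space:]]*\)true/\1false/' "$1" > "$_ncg_tmp" &&
        cat "$_ncg_tmp" > "$1" &&
        rm -f "$_ncg_tmp"
}
```

On the host this would be run as root, for example `no_cgroups_value /etc/nvidia-container-runtime/config.toml` to inspect the current value, and `set_no_cgroups_false` on the same path to change it. Backing up the file first is a sensible precaution.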

After saving the change, restart the Docker service:

systemctl restart docker

4. Verifying the fix

docker run --rm --gpus all nvidia/cuda:12.0.1-runtime-ubuntu22.04 nvidia-smi

With that, the problem is solved.
